Photo: Deborah Rose/UVa

I am a research scientist at Intel Labs, working on adversarial machine learning. I received my Ph.D. in Computer Science from the University of Virginia in May 2019, co-advised by Prof. David Evans and Prof. Yanjun Qi.

Previously I was an engineer at NISL, Tsinghua University.

Here's my Google Scholar profile.

Email: weilinuvagmail.com

Research

Feature Squeezing

NDSS 2018

Weilin Xu, David Evans, Yanjun Qi

We propose a new strategy, feature squeezing, that can be used to harden DNN models by detecting adversarial examples. Feature squeezing reduces the search space available to an adversary by coalescing similar samples that correspond to many different feature vectors in the original space into a single sample.

[ Paper ] [ Slides ] [ Code ] [ Website ]
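Below is a minimal sketch of the detection idea. It assumes grayscale images stored as nested lists with pixel values in [0, 1]. The detector runs the model on the original input and on each squeezed copy. It flags the input when the largest L1 distance between the prediction vectors exceeds a threshold. The two squeezers shown (bit-depth reduction and median smoothing), the `toy_model`, and the threshold value are illustrative assumptions. They are not the released code or tuned settings.

```python
from typing import Callable, List, Sequence

Image = List[List[float]]               # grayscale image, pixel values in [0, 1]
Model = Callable[[Image], List[float]]  # maps an image to a probability vector
Squeezer = Callable[[Image], Image]


def reduce_bit_depth(img: Image, bits: int) -> Image:
    """Squeeze each pixel onto 2**bits evenly spaced levels in [0, 1]."""
    levels = 2 ** bits - 1
    return [[round(p * levels) / levels for p in row] for row in img]


def median_filter(img: Image, size: int = 3) -> Image:
    """Replace each pixel by the median of its size x size window.

    Out-of-range indices are clamped to the border; for even sizes the
    upper of the two middle values is used.
    """
    h, w = len(img), len(img[0])
    r = size // 2
    out = []
    for i in range(h):
        row = []
        for j in range(w):
            window = sorted(
                img[min(max(i + di, 0), h - 1)][min(max(j + dj, 0), w - 1)]
                for di in range(-r, size - r)
                for dj in range(-r, size - r)
            )
            row.append(window[len(window) // 2])
        out.append(row)
    return out


def squeeze_score(model: Model, img: Image, squeezers: Sequence[Squeezer]) -> float:
    """Largest L1 distance between the prediction on img and on any squeezed copy."""
    original = model(img)
    return max(
        sum(abs(a - b) for a, b in zip(original, model(squeeze(img))))
        for squeeze in squeezers
    )


def is_adversarial(model: Model, img: Image, squeezers: Sequence[Squeezer],
                   threshold: float) -> bool:
    """Flag inputs whose predictions move too much under squeezing."""
    return squeeze_score(model, img, squeezers) > threshold


def toy_model(img: Image) -> List[float]:
    """Hypothetical stand-in for a DNN: two-class scores from mean brightness."""
    m = sum(sum(row) for row in img) / (len(img) * len(img[0]))
    return [1.0 - m, m]


# Hypothetical squeezer configuration: 1-bit depth reduction and a 3x3 median filter.
squeezers = [lambda x: reduce_bit_depth(x, 1), median_filter]
```

Consider a uniform image of 0.1 pixels. 1-bit squeezing pushes every pixel to 0, and the toy model's output shifts by about 0.2 in L1 distance. With a threshold of 0.1, `is_adversarial` therefore returns True. In practice the threshold would be chosen on legitimate examples. One option is to set it so that only a small fraction of them are flagged.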

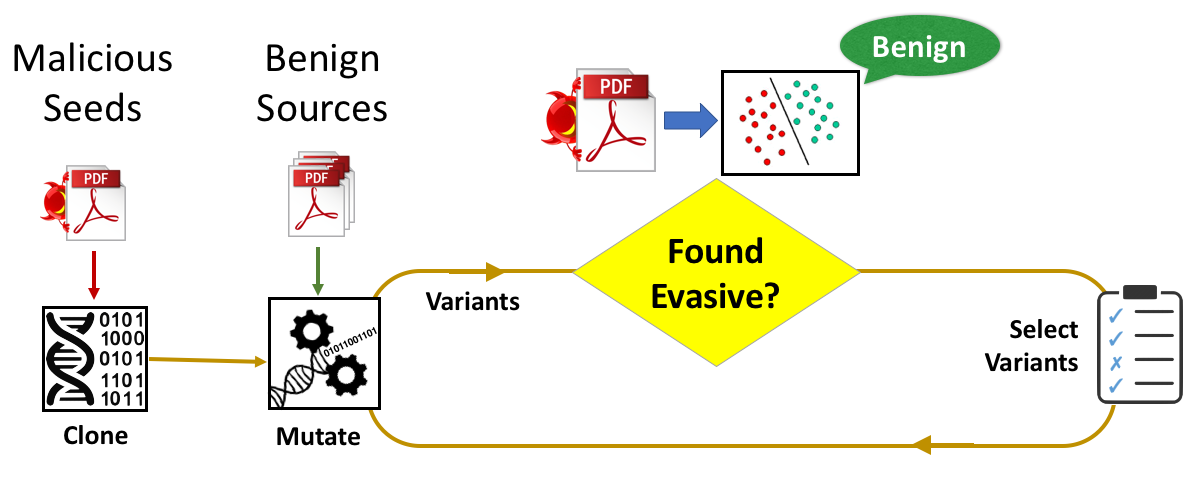

Automatically Evading Classifiers

NDSS 2016

Weilin Xu, Yanjun Qi, David Evans

Machine learning is widely used to develop classifiers for security tasks. However, the robustness of these methods against motivated adversaries is uncertain. In this work, we propose a generic method to evaluate the robustness of classifiers under attack. The key idea is to stochastically manipulate a malicious sample to find a variant that preserves the malicious behavior but is classified as benign by the classifier. We present a general approach to search for evasive variants and report on results from experiments.

[ Paper ] [ Slides ] [ Code ] [ Website ] [ Chinese summary @ inforsec.org ]
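The key idea can be shown with a minimal sketch of a stochastic evasion search. It assumes a malicious sample can be modeled as a list of tokens. The search mutates variants at random and uses an oracle to discard any that lose the malicious behavior. It keeps the variants the classifier scores as most benign until one drops below the decision threshold. The token representation, `mutate`, `evade`, the toy classifier, the oracle, and the donor pool are all hypothetical. This is a simplified mutation-and-selection loop, not the published system.

```python
import random
from typing import Callable, List, Optional

Sample = List[str]  # hypothetical: a malicious sample modeled as a sequence of tokens


def mutate(sample: Sample, donor_pool: List[str], rng: random.Random) -> Sample:
    """Return a copy with one token deleted, inserted, or replaced at random."""
    variant = list(sample)
    op = rng.choice(["delete", "insert", "replace"])
    if op == "delete" and variant:
        del variant[rng.randrange(len(variant))]
    elif op == "replace" and variant:
        variant[rng.randrange(len(variant))] = rng.choice(donor_pool)
    else:  # insert (also the fallback when the sample is empty)
        variant.insert(rng.randrange(len(variant) + 1), rng.choice(donor_pool))
    return variant


def evade(seed: Sample,
          classifier_score: Callable[[Sample], float],
          oracle: Callable[[Sample], bool],
          donor_pool: List[str],
          threshold: float = 0.0,
          population: int = 20,
          generations: int = 100,
          rng: Optional[random.Random] = None) -> Optional[Sample]:
    """Search for a variant the oracle still deems malicious but whose
    classifier score falls below `threshold` (i.e. it is classified as benign)."""
    if not oracle(seed):
        raise ValueError("seed must exhibit the malicious behavior")
    rng = rng or random.Random(0)
    pop = [seed]
    for _ in range(generations):
        children = [mutate(rng.choice(pop), donor_pool, rng) for _ in range(population)]
        # Discard variants that lost the malicious behavior, then keep the most
        # benign-looking survivors (parents included, so progress is never lost).
        survivors = sorted((v for v in children + pop if oracle(v)), key=classifier_score)
        pop = survivors[:population]
        if classifier_score(pop[0]) < threshold:
            return pop[0]
    return None  # no evasive variant found within the budget


# Hypothetical toy classifier and oracle, for illustration only.
SUSPICIOUS = {"js", "eval", "payload"}
BENIGN = {"page", "font", "image"}


def toy_score(sample: Sample) -> float:
    """Positive means 'malicious': suspicious tokens minus half the benign ones."""
    return sum(t in SUSPICIOUS for t in sample) - 0.5 * sum(t in BENIGN for t in sample)


def toy_oracle(sample: Sample) -> bool:
    """Stand-in for a sandbox check: the behavior survives while 'payload' is present."""
    return "payload" in sample


variant = evade(["header", "js", "eval", "payload"], toy_score, toy_oracle,
                donor_pool=["page", "font", "image", "header"])
```

Parents stay alongside children in the selection step, so the best score never gets worse from one generation to the next. A real oracle, such as running the sample in a sandbox, would cost far more than this membership test. The number of oracle calls would then typically dominate the running time.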

Invited Talks

Magic Tricks for Self-Driving Cars

On August 11, 2018, I gave a talk at the CAAD Village of DEF CON 2018 in Las Vegas about adversarial examples against object detection models. This work was done at Baidu X-Lab during my summer 2018 research internship.

[ Slides ]

Attack and Defense in Adversarial Machine Learning

In September 2017, I gave several invited talks on my research in China: at Tsinghua University, the Internet Security Conference 2017, Beijing University of Posts and Telecommunications, Baidu, ShanghaiTech University, and Sangfor.

Teaching

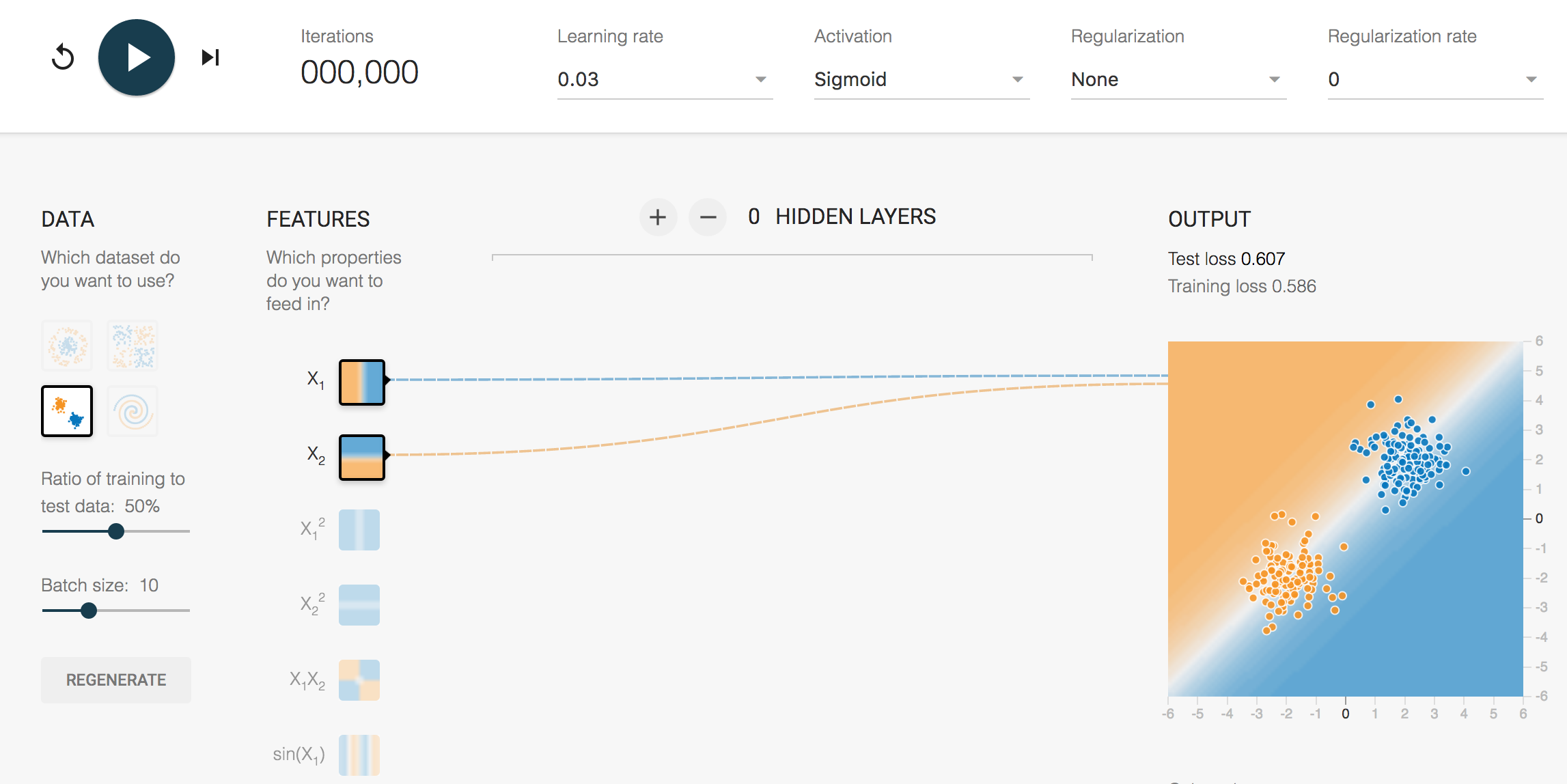

Neural Network Playground

TA for CS6316/4501, Fall 2016

We used a customized TensorFlow Playground in the machine learning course.

[ Note ] [ Playground@UVa ]

Rust Class

TA for CS4414, Fall 2013 and Spring 2014

I assisted Prof. David Evans in developing an undergraduate operating systems course focused on systems programming, the first course in the world to use the Rust programming language.

[ Website ]

Fun Experience

Coming to America: how Google Summer of Code helped change my life

I was invited by Fyodor, the author of Nmap, to write a post on the Google Open Source Blog about my exciting experience with the Google Summer of Code program.